Greetings everyone! 🎉

I am Aditi, and this summer I will be working on the project “OpenWrt Device Pages”. In this blog post I’d like to talk a little about myself, my project, and my first week!

About me

Hey!!👋

I am Aditi Singh, a Computer Science student from India. I have been studying programming for the past 3 years.

After a little exploration of so many appealing domains, I finally found the one for me – Web Development.

I’ve been working on web development for the past 6 months. In January, I heard about open source from a college senior. The idea of writing hundreds of lines of code that could be used by thousands of people piqued my interest. The best part was that people did it purely for their love of coding and of contributing to something bigger than themselves. So, after struggling with impostor syndrome for a while, I gathered up the courage to start contributing to open source.

Open source can be a little overwhelming to begin with, but it turned out to be an absolutely amazing experience. I have never before been in such a supportive and encouraging environment.

Later, in March, I found out about GSoC, and through GSoC I came across Freifunk. After browsing through hundreds of projects, the OpenWrt Device Pages idea was the one that sounded most fascinating to me and was closely related to my domain.

About OpenWrt

The OpenWrt Project is a Linux operating system targeting embedded devices. Instead of trying to create a single, static firmware, OpenWrt provides a fully writable file system with package management. This allows you to customise the device through the use of packages to suit any application, freeing its users from the application selection and configuration provided by the vendor. For developers, OpenWrt is the framework to build an application without having to build a complete firmware around it. For users, this means the ability to fully customise the device and use it in ways never envisioned.

About OpenWrt Device Page Project

The main aim of the OpenWrt Device Page Project is to create an overview of OpenWrt-supported devices that simplifies users’ choices when acquiring new devices. The goal is to evaluate user needs and plan new device pages based on those requirements, making it convenient for users to select the right devices. Since OpenWrt has a lot of data, it can be overwhelming for new users to find suitable devices that cater to their needs. To simplify this, the project can be broken down into two major sub-tasks:

- Creation of an input form from a JSON Schema, to simplify the process of adding device metadata to the GitHub repository.
- Once the input form exists, rendering the device pages with search masks, allowing users to search specifically for devices with certain features like a USB port, WiFi 6, etc.
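To make the search-mask idea a bit more concrete, here is a minimal TypeScript sketch of filtering device records by the features a user ticks. The `Device` and `SearchMask` shapes, the `searchDevices` function and the example devices are all hypothetical and are not the project's actual data model.

```typescript
// Hypothetical shape of one device's metadata record; the real field names
// would come from the project's JSON Schema, so these are assumptions.
interface Device {
  vendor: string;
  model: string;
  usbPorts: number;
  wifiStandards: string[]; // e.g. "802.11n", "802.11ax" (WiFi 6)
}

// A search mask: every field is optional, and only the fields the user
// has actually set are applied as filters.
interface SearchMask {
  minUsbPorts?: number;
  wifiStandard?: string;
}

// Return the devices that satisfy every criterion set in the mask.
function searchDevices(devices: Device[], mask: SearchMask): Device[] {
  return devices.filter((d) => {
    if (mask.minUsbPorts !== undefined && d.usbPorts < mask.minUsbPorts) {
      return false;
    }
    if (
      mask.wifiStandard !== undefined &&
      !d.wifiStandards.some((s) => s === mask.wifiStandard)
    ) {
      return false;
    }
    return true;
  });
}

// Made-up example data, for illustration only.
const devices: Device[] = [
  { vendor: "ExampleVendor", model: "Router A", usbPorts: 1, wifiStandards: ["802.11n", "802.11ac"] },
  { vendor: "ExampleVendor", model: "Router B", usbPorts: 0, wifiStandards: ["802.11n", "802.11ac", "802.11ax"] },
  { vendor: "OtherVendor", model: "Router C", usbPorts: 2, wifiStandards: ["802.11n", "802.11ac", "802.11ax"] },
];

// Devices with at least one USB port and WiFi 6 (802.11ax) support:
// only "Router C" matches.
const matches = searchDevices(devices, { minUsbPorts: 1, wifiStandard: "802.11ax" });
console.log(matches.map((d) => d.model));
```

Keeping every mask field optional means an empty mask simply returns all devices. Adding a new filter only requires one more optional field and one more check.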

The first week of GSoC

After the community bonding period, the mentors and I have been breaking the project down into atomic tasks with weekly milestones. The first task is to create a nice, user-friendly input form that stores device metadata in a GitHub repository.

We have a basic input form ready, generated from the JSON Schema using React JSON Schema Form (RJSF). The RJSF community has been very helpful in assisting us with the functionality we need. Upcoming major tasks include modifying the input form to support auto-fill and info buttons.
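As a rough illustration of the schema-driven approach, here is a small TypeScript sketch. It defines a device-metadata schema and a function that pre-fills form data from schema defaults plus any already-known values, which is one possible reading of "auto-fill". The field names, `prefill` and `missingRequired` are hypothetical. In RJSF, an object like `deviceSchema` would be passed to the form's `schema` prop. RJSF already applies `default` values on its own, so this only spells the idea out.

```typescript
// A tiny subset of JSON Schema, just enough for this sketch.
interface SchemaProperty {
  type: "string" | "number" | "integer" | "boolean";
  title?: string;
  description?: string; // text an info button could show (hypothetical use)
  default?: string | number | boolean;
}

interface ObjectSchema {
  type: "object";
  required?: string[];
  properties: Record<string, SchemaProperty>;
}

// Hypothetical device-metadata schema; field names are illustrative only.
const deviceSchema: ObjectSchema = {
  type: "object",
  required: ["vendor", "model"],
  properties: {
    vendor: { type: "string", title: "Vendor" },
    model: { type: "string", title: "Model" },
    usbPorts: { type: "integer", title: "USB ports", default: 0, description: "Number of USB ports" },
    wifi6: { type: "boolean", title: "WiFi 6 support", default: false },
  },
};

type FormData = Record<string, string | number | boolean>;

// Start from the schema's default values, then overlay known values
// (e.g. from an existing record). Keys not in the schema are ignored.
function prefill(schema: ObjectSchema, known: FormData): FormData {
  const data: FormData = {};
  for (const key of Object.keys(schema.properties)) {
    const prop = schema.properties[key];
    if (prop.default !== undefined) data[key] = prop.default;
  }
  for (const key of Object.keys(known)) {
    if (key in schema.properties) data[key] = known[key];
  }
  return data;
}

// List required fields that are still missing or empty.
function missingRequired(schema: ObjectSchema, data: FormData): string[] {
  return (schema.required ?? []).filter(
    (key) => data[key] === undefined || data[key] === ""
  );
}

const formData = prefill(deviceSchema, { vendor: "ExampleVendor" });
console.log(formData, missingRequired(deviceSchema, formData));
```

Here the pre-filled data gets `usbPorts: 0` and `wifi6: false` from the defaults, keeps the known vendor, and reports `model` as the only required field still missing.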

Apart from this, I had to get an overview of how Jekyll/Hugo work and understand how YAML works, which will help with the project later on!
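Both Jekyll and Hugo read page metadata from YAML front matter between `---` lines. Here is a minimal TypeScript sketch of turning a device record into such front matter. The `toFrontMatter` helper and the field names are hypothetical. The sketch assumes flat records with simple keys, and it double-quotes every string so values like `yes` or `1.0` are not reinterpreted by YAML.

```typescript
type Scalar = string | number | boolean;
type FrontMatterValue = Scalar | string[];

// Render one scalar. Strings are JSON-quoted, which is also a valid YAML
// double-quoted scalar, so YAML won't reinterpret values like "yes" or "1.0".
function yamlScalar(value: Scalar): string {
  return typeof value === "string" ? JSON.stringify(value) : String(value);
}

// Build a front matter block from a flat record (keys assumed to be
// simple identifiers such as "usb_ports").
function toFrontMatter(fields: Record<string, FrontMatterValue>): string {
  const lines: string[] = ["---"];
  for (const key of Object.keys(fields)) {
    const value = fields[key];
    if (Array.isArray(value)) {
      if (value.length === 0) {
        lines.push(`${key}: []`); // explicit empty list instead of null
      } else {
        lines.push(`${key}:`);
        for (const item of value) lines.push(`  - ${yamlScalar(item)}`);
      }
    } else {
      lines.push(`${key}: ${yamlScalar(value)}`);
    }
  }
  lines.push("---");
  return lines.join("\n") + "\n";
}

const page = toFrontMatter({
  title: "ExampleVendor Router C",
  usb_ports: 2,
  wifi6: true,
  wifi_standards: ["802.11n", "802.11ac", "802.11ax"],
});
console.log(page);
```

For the example record, the output starts with `---`, then `title: "ExampleVendor Router C"`, `usb_ports: 2`, `wifi6: true`, and a `wifi_standards:` list with one quoted entry per line, followed by a closing `---`.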

It has been quite a learning experience and I am looking forward to achieving more amazing things this summer with Freifunk! 🎉

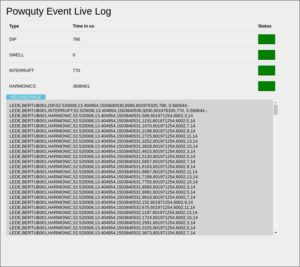

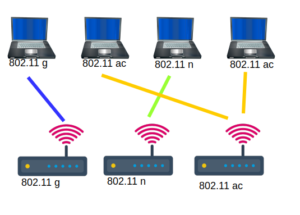

If anybody is wondering why I am interested in the capabilities: I want to create a hearing map for every client. I’m building this hearing map from probe request messages. These probe request messages contain information such as RSSI, capabilities, HT capabilities, VHT capabilities, and so on. VHT gives clients the opportunity to transfer up to about 1.75 Gbit/s (theoretically…). If you want to select an AP, you should consider the capabilities… In the normal hostapd configuration you can even set a flag that forbids 802.11b rates. If you are curious what happens when an 802.11b client joins your network, search for: WiFi performance anomaly. 🙂
