GSoC 2017 – OpenWifi

Hi, my name is Johannes Wegener and I’ll be working on OpenWifi this Google Summer of Code. I’m 27 years old and study computer engineering at TU Berlin. In this blog post I’m going to explain to you what OpenWifi is and what should be done during this summer of code.

What is OpenWifi?

OpenWifi is a OpenWRT/LEDE configuration management system. It is intended to manage a bigger or smaller amount of OpenWRT/LEDE devices.

If a new access point joins a network it is be able to auto detect the management Server and register to it. After the node has been registered its configuration (static configuration like what you’ll typically find in /etc/config/ on OpenWRT/LEDE devices) will be downloaded by the server and stored in a database.

It is now possible to query and modify aspects of the configuration. It also possible to change values depending on other values. This means configuration changes can be applied to a lot of different routers. There is an older templating system which shall be replaced by a new graph-oriented one. It also manages SSH-Keys, has a rudimentary file upload functionality (for uploading new images for example) and extensible API. It is possible for example for a node to ask for an image it should install. (Currently this is not very dynamic – but it is intended that an image could be selected on various parameters)

The Server also regularly pulls the health-state of the node and displays it in an overview. Furthermore the server acts as a luci2 proxy.

It is also extensible via PlugIns and is able to serve as an entry point to other services like icinga or location services. (Both already have a proof of concept PlugIn available)

How does it work? aka what makes it tick?

The main software is written in python and uses the pyramid framework and sqlalchemy as ORM. It uses pyramid-rpc for json-rpc requests (which is used for nodes to register for example) and cornice for a REST-style API (which is intended for the user of the system). The Core-System can be found in this repository.

Most of the tasks operating on nodes are done by a jobserver that uses celery – so that they don’t block the main thread.

All Webviews have been moved to a different repository and are realised in fact as a PlugIn. (If you just want to manage your nodes on CLI/with scripts this will be possible in the near future!)

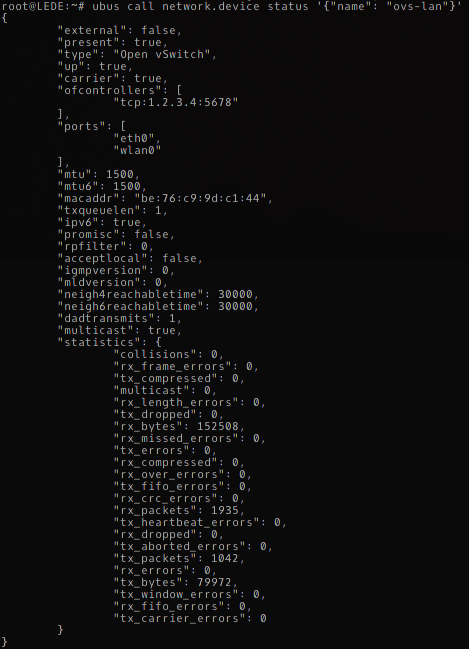

The nodes use a notification script written as a shell script. It uses a fixed DNS entry (openwifi), a configuration file (/etc/config/openwifi) or mdns (using umdns or avahi) to detect a server and register to it. The packages can be found in the openwifi-feed repository. There is also a boot-flasher which is intended for flashing an alix2-style board from ramfs – since it is also possible to execute commands on the node I’ll integrate an update solution that uses sysupgrade.

The communication between the server and the node is realized currently via rpcd. But one goal during this Google Summer of Code is to abstract that and realize it via a rpcd-communication-PlugIn – this would make it possible to also a NetJSON-communication-PlugIn for example.

The PlugIns are realized with python entry points. There are some special named entry points which will extend the main application. You could have a look at the example plugin to get an idea how it works.

How to try it?

You can easily try the software with docker. Navigate to the Docker directory – there three files that will assist you with the setup. In conf.sh you setup if you want to use LDAP for authentication, avahi for mdns announcement and dnsmasq as dhcp server in the docker container.

With build_image.sh you build the docker image. It tries to detect if you need to use sudo for docker or not. Last but not least you need run_image.sh to start the image.

You can easily add PlugIns to the docker image if you just check them out or copy them inside the Plugins directory. To start off I would recommend to add the Web-Views Plugin and use avahi. Now you can navigate your browser to http://localhost:6543 to see the webviews (you need to restart the container after Plugin install – use docker stop OpenWifi and docker start OpenWifi).

What is needs to be done?

In the next section I’ll explain what should be done during Google Summer of Code. I need to prioritize these things because I’m not sure if it is possible to do everything. I think most important things are Testing, Authentication and the new graph-based database model.

Testing-Infrastructure

Currently just very few things have tests. But because it is possible to start a LEDE container in docker (there are some scripts helping to create a LEDE container in the Docker/LEDEImage directory) a lot of things are possible to test with docker orchestration. I want to provide a small LEDE image with the code to test it.

Before adding big new things I like to transfer the development to true test-driven development and having test for at least 90% of the core component code. And after that continuing development by writing tests first.

For that I would also have a look at coverage.py.

Security and authentication

There is a simplistic authorization for OpenWifi with a LDAP backend. I would like to add authorization based on user/password (without LDAP), API-Key and client side certificate (for the nodes to authenticate theirself against the API).

I would like to have the authorization as granular as possible. Authorization should check whether the authorized identity is able to operate on this node and what kind of action is allowed.

Security is provided by TLS. Last week I added the last bits to also use https for client communication – but right now it needs to be setup up by the user. I would like to add the appropriate hooks to the notification shell script.

Expand new graph-based DB-Model

The new DB-Configuration model is based on a graph – where configurations can be connected by links (the link can contain addition information – like what kind of link it is – currently the addition data is the option name (for example if you have a wifi-iface configuration the “device” links it to a wifi-device)).

The model is implemented and there is a query API to get and set options. But for communication purposes the configuration is stored as uci-json-config. There is code to convert between these two (but the one from uci to graph-model needs quite some more intelligence to detect everything right). There are also some hooks that update each part. But the update process is not consistent – the graph-based config gets a new ID for example. It would be nice to have a consistent conversion.

Furthermore I like nodes to share parts of a configuration. This should be easy to implement with some small DB changes. For this consistency is also very important. By default UCI generates unique strings for every configuration – this could be used for consistency.

The DB-Model also currently lacks creating new configurations and reordering configurations.

API

The API to modify nodes should get expanded and everything should get documented. (see above) This should be done in code and a document generated by sphinx.

I also like to implement a CLI program to use the API.

Versioning

The uci-json parser supports diffs between configurations. I would like to save a diff for each change which is applied – to have roll back configurations or delete a specific change.

There is a simplistic revisioning implemented. But the diff should be updated to a class of its own with json import and export and operations to apply a diff to a UCI configuration. (upgrade and downgrade)

Modularity and expandability

OpenWifi is right now is already quite modular – but I also want to modularize configuration parsing and communication. It would also be nice to have some interoperability with OpenWISP.

I use alembic for tracking database changes it would be nice to find a good way to use alembic for database changes needed by PlugIns as well.

Scheduled Updates

I want to schedule config updates – for example if you have a mesh you probably want to update the deepest nested nodes first and than the ones above etc. – this should be easy with celery (as long as the mesh is known and somehow represented in the database).