After three weeks of coding, here we are at the first evaluation!

During the first week I talked with my mentors about how to steer the project. I started a [repo](https://gitlab.com/jpascualsana/retroshare-python-bot) to code a “bot” that wraps the Retroshare JSON API for easier interaction. However, I put that work on hold because we are looking for a way to generate the API wrapper with Doxygen (see [@Sehraf's work](https://gist.github.com/sehraf/23cbc8ba076b63634fee0235d74cff4b)).

So I got a list of different projects from my mentors, and I started to [write different scripts](https://gitlab.com/jpascualsana/public-datasets-import) to import their data into the Retroshare network. Some of these projects are:

- Wikimedia based projects

- WordPress blogs

- Project Gutenberg

- ActivityPub

- RSS

- Radio Onda Rossa

- XRCB.cat

- RadioTeca

These scripts parse the sites in different ways and collect their information as a preliminary step before publishing it on the RetroShare network, categorized as channels. The scripts are able to:

- Parse the site/project getting all “pages” of interest with different strategies.

- Get updates (the pages that have changed since the last time the info was retrieved).

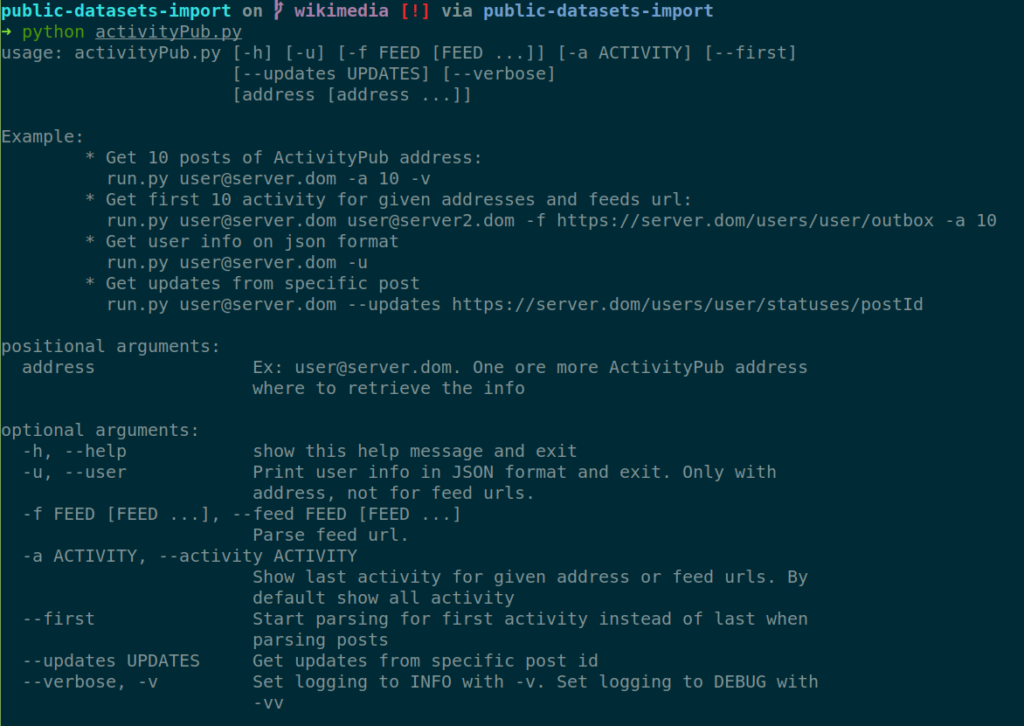

- Run from the command line with argument parsing. See the `-h` option for the supported options.
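To give an idea of how these three capabilities fit together, here is a minimal sketch for the RSS case. It is not the code from the repo: the function names, the `--since` flag and reading the feed from a saved file are all assumptions made just for this illustration, and it only uses the Python standard library.

```python
import argparse
from datetime import datetime, timezone
from email.utils import parsedate_to_datetime
import xml.etree.ElementTree as ET


def parse_items(rss_xml):
    """Return (title, link, published) tuples for every <item> in an RSS 2.0 document."""
    root = ET.fromstring(rss_xml)
    items = []
    for item in root.iter("item"):
        # RSS 2.0 dates use the RFC 822 format, e.g. "Mon, 01 Jul 2019 10:00:00 +0000"
        published = parsedate_to_datetime(item.findtext("pubDate"))
        items.append((item.findtext("title"), item.findtext("link"), published))
    return items


def updated_since(items, last_run):
    """Keep only the items published after the previous retrieval."""
    return [item for item in items if item[2] > last_run]


def parse_since(text):
    """Parse an ISO 8601 timestamp; treat it as UTC when no offset is given."""
    moment = datetime.fromisoformat(text)
    if moment.tzinfo is None:
        moment = moment.replace(tzinfo=timezone.utc)
    return moment


def build_parser():
    # Hypothetical options, chosen for this sketch only
    parser = argparse.ArgumentParser(description="Sketch of an RSS importer")
    parser.add_argument("feed_file", help="path to a saved RSS 2.0 file")
    parser.add_argument("--since", default="1970-01-01T00:00:00",
                        help="ISO 8601 timestamp of the last retrieval")
    return parser


def main(argv=None):
    args = build_parser().parse_args(argv)
    with open(args.feed_file, encoding="utf-8") as handle:
        items = parse_items(handle.read())
    for title, link, published in updated_since(items, parse_since(args.since)):
        print(f"{published.isoformat()} {title} {link}")
```

In a real script `main()` would be called under the usual `if __name__ == "__main__":` guard, and something like `python rss_sketch.py feed.xml --since 2019-07-05T00:00:00` (the file name is made up) would list only the entries published after that date. argparse generates the `-h` output automatically from the declared arguments.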

So the next step is to use these scripts to import this information into the Retroshare network using a wrapper dynamically generated by Doxygen.

The screenshot shows the help output of the script that imports from ActivityPub.

Something similar for channels and directory sync would be nice.