
Over the past few weeks I have made several improvements to my initial demo application:

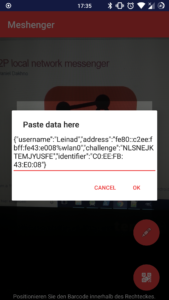

- share information through channels such as Telegram instead of a QR code

- turn off the screen when the earpiece is held to the ear during a call

- use an IPv6 link-local address when possible, with IPv4 as fallback
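The address preference in the last item can be sketched in plain Java. This is only a minimal illustration, not the code used in the app: the class name `AddressPicker` and its methods `parse` and `pick` are hypothetical, and the candidate list is hard-coded here, whereas on a device it would presumably come from something like `NetworkInterface.getNetworkInterfaces()`.

```java
import java.net.Inet4Address;
import java.net.Inet6Address;
import java.net.InetAddress;
import java.net.UnknownHostException;
import java.util.Arrays;
import java.util.List;

// Hypothetical helper, for illustration only.
public class AddressPicker {

    // Parses an IP literal; InetAddress.getByName does no DNS lookup for literals.
    static InetAddress parse(String literal) {
        try {
            return InetAddress.getByName(literal);
        } catch (UnknownHostException e) {
            throw new IllegalArgumentException("not an IP literal: " + literal, e);
        }
    }

    // Returns the first IPv6 link-local address, otherwise the first
    // non-loopback IPv4 address, otherwise null.
    static InetAddress pick(List<InetAddress> candidates) {
        InetAddress ipv4Fallback = null;
        for (InetAddress addr : candidates) {
            if (addr.isLoopbackAddress()) {
                continue; // never useful for reaching another device
            }
            if (addr instanceof Inet6Address && addr.isLinkLocalAddress()) {
                return addr; // preferred: fe80::/10
            }
            if (addr instanceof Inet4Address && ipv4Fallback == null) {
                ipv4Fallback = addr; // remember the first IPv4 candidate
            }
        }
        return ipv4Fallback;
    }

    public static void main(String[] args) {
        List<InetAddress> both = Arrays.asList(parse("192.168.1.23"), parse("fe80::1"));
        List<InetAddress> v4Only = Arrays.asList(parse("127.0.0.1"), parse("192.168.1.23"));
        System.out.println("IPv6 available: " + pick(both).getHostAddress());
        System.out.println("IPv4 only:      " + pick(v4Only).getHostAddress());
    }
}
```

Note that a link-local IPv6 address is only meaningful together with the interface it belongs to, so real connection code would also have to carry the scope (interface) along with the address.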

I have also started on WebRTC development, searching for and evaluating different projects with similar goals:

- serverless-webrtc-android

  - bare-bones example of WebRTC without signalling servers

  - I ported the code from Kotlin to Java to understand it better and to integrate it into the project

- webrtc-android-codelab

  - loopback example: a WebRTC PeerConnection through localhost, providing insight into how to connect two nodes running on the same device

- android-webrtc-tutorial

  - insight into how signalling works through an external server

These examples helped me build up both knowledge and a codebase, which I will use to implement video and audio transmission over WebRTC.

Evaluating, hacking on, and testing these examples gave me an understanding of the inner workings of WebRTC, which will help with integrating WebRTC into Meshenger.

Here are some screenshots of the current state of the application:

My next step will be to adapt these projects to the newest WebRTC version, to keep working through the fundamentals of WebRTC, and to find a way to circumvent a central server.