Previous post: https://blog.freifunk.net/2023/07/08/gsoc23-automation-tools-for-libremesh-firmware-build-and-monitoring-part-2/

Project results

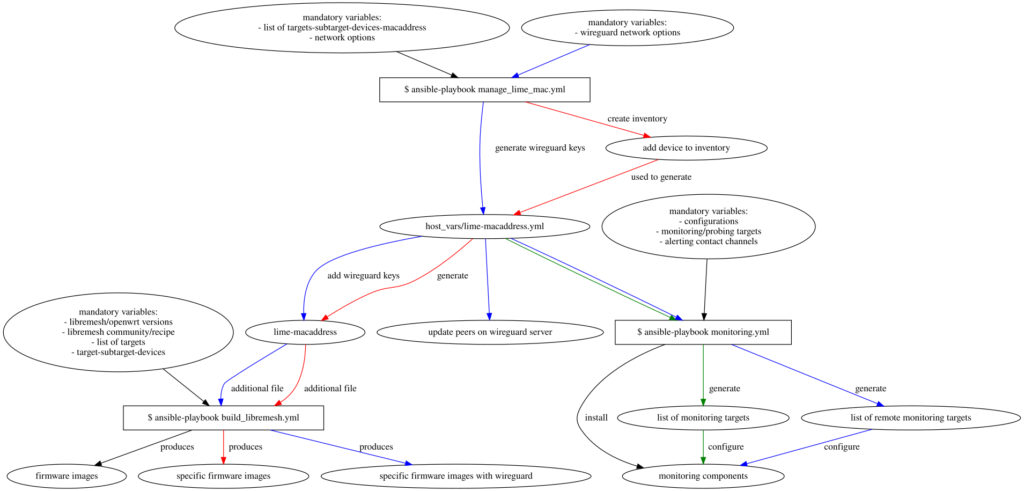

The code produced during the project lives in the following repositories; the first one is the main one:

https://gitlab.com/a-gave/libremesh-ansible-playbooks

https://gitlab.com/a-gave/libremesh-ansible-collection

https://gitlab.com/a-gave/ansible_openwrt_buildroot

Playbooks and roles to build releases

In this final part, besides improving the code, I also used it extensively to prepare a list of firmware images for the new releases of LibreMesh: v2020.3, based on OpenWrt 19.07.10, and the upcoming release v2023.1-rc1, which supports the latest OpenWrt 22.03.5.

I extended and tested the automation tools so that they can build for all devices of a given target/subtarget, in order to produce the precompiled firmware images for the latest LibreMesh releases. The list of targets/subtargets follows the advice given at one of the latest LibreMesh meetings about the most used architectures, which largely coincide with those that include many low-cost devices.

Building for all devices meant running into these kinds of issues:

- the `default` set of lime-packages doesn’t fit in the factory and/or the sysupgrade image of a device, and this causes a build failure.

- the same as above, but the device fails silently without interrupting the build.

- multicore/parallel compilation fails randomly.
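One common way to cope with the third issue is to retry a failed parallel build single-threaded with verbose output (`make -j1 V=s`, the invocation the OpenWrt build system itself suggests for debugging). The following is only a minimal sketch of that idea, not the code used in the playbooks; the function names `run_make` and `build_with_fallback` are hypothetical.

```python
import subprocess
from typing import Callable, Sequence


def run_make(args: Sequence[str]) -> int:
    """Run `make` with the given arguments in the current directory
    and return its exit code."""
    return subprocess.run(["make", *args]).returncode


def build_with_fallback(
    jobs: int,
    run: Callable[[Sequence[str]], int] = run_make,
) -> str:
    """Try a parallel build first; if it fails, retry once with a
    single job and verbose output.

    Returns "parallel", "serial" or "failed" (hypothetical labels).
    """
    if run([f"-j{jobs}"]) == 0:
        return "parallel"
    # A parallel build that fails at random often succeeds when repeated
    # with one job; V=s also makes a real error visible in the log.
    if run(["-j1", "V=s"]) == 0:
        return "serial"
    return "failed"
```

The `run` parameter is injectable so that the logic can be tried without an actual buildroot, for example `build_with_fallback(8, run=lambda args: 0)`.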

For the first problem, the OpenWrt Buildroot has no mechanism to predict the resulting firmware size, for example from the list of selected packages. This seems to be related to the compression tools used to produce the firmware, which can differ between devices: the build may succeed for one device with an image size of 7168k and fail for another device with the same image size. As a result, it is not possible to predict whether the produced LibreMesh firmware will fit in the device memory, and the devices that cause a build failure have to be identified one by one.

I took notes of the devices that cause a build failure, for the selected list of targets/subtargets and for the mentioned OpenWrt and LibreMesh releases, using the default set of LibreMesh packages. The first step was to provide a mechanism to exclude the devices that throw an error. This doesn’t mean that the other devices are somehow ‘supported’, but simply that the build doesn’t fail. Since this mapping of all devices is still in development, it is available on the `dev` branch of the collection of roles:
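Devices that fail silently can only be spotted after the build, by checking which of them produced no image. Below is a rough sketch of such a check. It assumes that each image file name contains the device profile name surrounded by dashes (as in `openwrt-22.03.5-ath79-generic-tplink_archer-c7-v2-squashfs-sysupgrade.bin`); the function name and the matching rule are illustrative, not taken from the roles.

```python
from typing import Iterable, List


def devices_without_image(
    filenames: Iterable[str],
    devices: Iterable[str],
    kind: str = "sysupgrade",
) -> List[str]:
    """Return the devices for which no image of the given kind
    ("sysupgrade" or "factory") appears among the file names."""
    names = list(filenames)
    missing = []
    for device in devices:
        # Assumption: the profile name is delimited by dashes in the file name.
        marker = f"-{device}-"
        if not any(marker in name and kind in name for name in names):
            missing.append(device)
    return missing
```

Fed with something like `os.listdir("bin/targets/ath79/generic")`, the result is a candidate list of devices to exclude from the next build.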

- Notes about LibreMesh 2020.3 on OpenWrt 19.07.10: https://gitlab.com/a-gave/libremesh-ansible-collection/-/tree/dev/target/libremesh_2020.3/openwrt_19.07.10?ref_type=heads

- Notes about LibreMesh 2023.1-rc1 on OpenWrt 22.03.5: https://gitlab.com/a-gave/libremesh-ansible-collection/-/tree/dev/target/libremesh_master/openwrt_22.03.5?ref_type=heads

Since LibreMesh support for OpenWrt 22.03.5 is still in testing, and the time and space needed to rebuild all the images for the selected architectures may be considerable, I put two mechanisms in place:

- a list of `supported_devices`, which can be used to rebuild only a subset of devices, ideally those owned by people or communities who can test them.

- a set of Docker images for different OpenWrt targets/subtargets, which I started to set up to build with the default LibreMesh packages and which I briefly explain below.
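How a `supported_devices` list and a list of excluded devices could combine into the final build list can be sketched as follows. Only the name `supported_devices` comes from the roles; the function and the other parameter names are hypothetical, and the real roles are written in Ansible rather than Python.

```python
from typing import Iterable, List, Optional


def select_devices(
    all_devices: Iterable[str],
    supported_devices: Optional[Iterable[str]] = None,
    excluded_devices: Iterable[str] = (),
) -> List[str]:
    """Pick the devices to build for a target/subtarget.

    If `supported_devices` is given, only those devices are kept;
    devices known to break the build are always dropped.
    The order of `all_devices` is preserved.
    """
    excluded = set(excluded_devices)
    allowed = None if supported_devices is None else set(supported_devices)
    return [
        device
        for device in all_devices
        if device not in excluded and (allowed is None or device in allowed)
    ]
```

With no `supported_devices`, every device of the target is built except the excluded ones; with the list set, the build shrinks to the devices that someone can actually test.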

Dockerized buildroot for each target/subtarget

There is a set of Dockerfiles that I’m including in the set of Ansible playbooks/roles to speed up the build process and to save space across different LibreMesh releases based on the same OpenWrt release.

https://github.com/a-gave/libremesh_openwrt_buildroot_docker

It is designed to rebuild different versions of LibreMesh in separate environments while avoiding rebuilding the same OpenWrt tools and toolchain multiple times. For instance, if the build of LibreMesh for all devices of the OpenWrt target ath79/generic takes 1 hour and produces a 28.8GB image, a subsequent build with minor changes will take around 30 minutes and increase the size of the produced Docker image by only 15GB. Similarly, a Docker image with precompiled tools and toolchain, a pre-extracted kernel, and precompiled kmods and packages, built by specifying only the target/subtarget and no device, takes 11.2GB of space but then allows building an image with the set of suggested LibreMesh packages preselected within 4 minutes. This time also depends on the number of additional packages selected and on the computing resources available on the builder machine.
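To get a feeling for what these figures imply over several rebuilds, here is a small back-of-the-envelope calculation. It assumes that every incremental rebuild costs the same as the one measured above (about 30 minutes and 15GB), which is a simplification; the function name is made up for this example.

```python
from typing import Tuple


def estimate_cost(
    first_minutes: float,
    first_gb: float,
    rebuild_minutes: float,
    rebuild_gb: float,
    rebuilds: int,
) -> Tuple[float, float]:
    """Return (total minutes, total GB) for one initial build followed
    by `rebuilds` further builds, assuming each costs the same."""
    minutes = first_minutes + rebuild_minutes * rebuilds
    gigabytes = round(first_gb + rebuild_gb * rebuilds, 1)
    return minutes, gigabytes


# ath79/generic, one full build plus two incremental rebuilds on top of it
incremental = estimate_cost(60, 28.8, 30, 15, rebuilds=2)
# the same three builds, each done from scratch
from_scratch = estimate_cost(60, 28.8, 60, 28.8, rebuilds=2)
print(incremental, from_scratch)
```

Under this assumption, three builds take about 120 minutes and 58.8GB when done incrementally, against 180 minutes and 86.4GB when each starts from scratch.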

Other contributions

In this period I also contributed to the LibreMesh project by providing metrics for this analysis of the effect of changing the default distance setting for long wireless links.

https://github.com/ilario/wifi-distance-setting-exploration

This is a kind of regression for outdoor devices, still unfixed for two reasons:

- neither OpenWrt nor LibreMesh has an easy way to determine whether a device is manufactured for indoor use (typically a router) or outdoor use (an antenna).

- to improve the performance of routers, LibreMesh chose to lower the default distance, which puts antennas at a disadvantage. They are more likely to end up with a broken wireless link (as if a cable had been cut) if the device is accidentally reset, so they require a custom build with different defaults.

Conclusion

Thanks to Ilario and Stefca for being my mentors, to the Freifunk and LibreMesh organizations that made this work possible, and to all the people of OpenWrt and Gluon who contribute to free and open source networks.