During the last few weeks I set up the testbed for the wireless connection testing framework conTest and added some new functions.

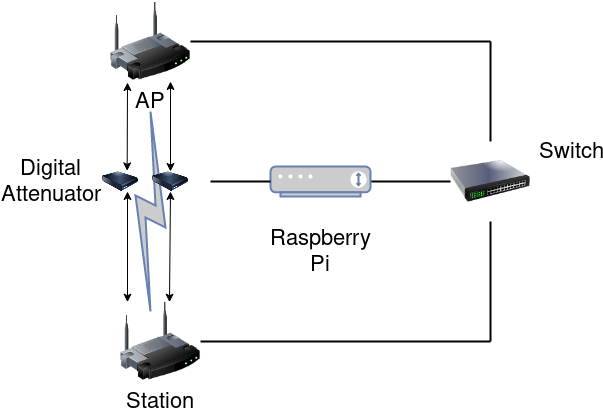

The figure below shows the physical setup, followed by a schematic overview.

The user can now specify which files should be collected by conTest. Currently, the user can only adjust one overall collection interval. To ensure everything is captured, it should be set to half the write interval of the most frequently written file. As a next step I will introduce individual file read times, as this will reduce network, CPU and storage/memory load.
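The rule of thumb above can be written down as a small helper. This is only a sketch: the function name, the file paths and the write intervals are made up for illustration and are not taken from conTest.

```python
from typing import Dict


def overall_collection_interval(write_intervals_s: Dict[str, float]) -> float:
    """Return half the write interval of the most frequently written file.

    write_intervals_s maps a file path to the (assumed) number of seconds
    between two writes to that file.
    """
    if not write_intervals_s:
        raise ValueError("at least one file is required")
    # The most frequently written file has the smallest write interval.
    return min(write_intervals_s.values()) / 2.0


# Hypothetical files and intervals, for illustration only.
example = {"/proc/net/wireless": 1.0, "/proc/net/dev": 0.5}
interval = overall_collection_interval(example)
```

With a single overall interval, every file is read at the rate the fastest file needs, which is the extra load that individual read times would avoid.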

In addition, I added functions to monitor the wireless network interfaces and capture the traffic using tcpdump. The captured output is written to the controlling machine directly over the wired network interface.
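One way such a capture could be wired up is to run tcpdump on the device under test through ssh and stream the packets to a local file. This is a sketch under assumptions: how conTest actually transports the capture is not described here, and the function names, host name and interface name are hypothetical. The tcpdump options used are `-i` (interface), `-U` (write each packet as soon as it is captured) and `-w -` (write the raw capture to standard output).

```python
import subprocess
from typing import List


def remote_capture_command(host: str, interface: str) -> List[str]:
    """Build an ssh command that runs tcpdump remotely and writes pcap data to stdout."""
    return ["ssh", host, "tcpdump", "-i", interface, "-U", "-w", "-"]


def start_remote_capture(host: str, interface: str, out_path: str) -> subprocess.Popen:
    """Start the capture and store the stream in a local pcap file.

    Assumes that `host` is reachable over the wired interface and that the
    remote user is allowed to run tcpdump.
    """
    out_file = open(out_path, "wb")
    process = subprocess.Popen(remote_capture_command(host, interface), stdout=out_file)
    out_file.close()  # the child process keeps its own copy of the file descriptor
    return process
```

A call such as `start_remote_capture("dut1", "wlan0", "run1.pcap")` returns the process handle; calling `terminate()` on it stops the capture.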

I started to improve the overall code quality of conTest to have a solid code base. To reduce overhead, I started sharing code between the main framework and the monitoring part, which can be separated from the rest of conTest.

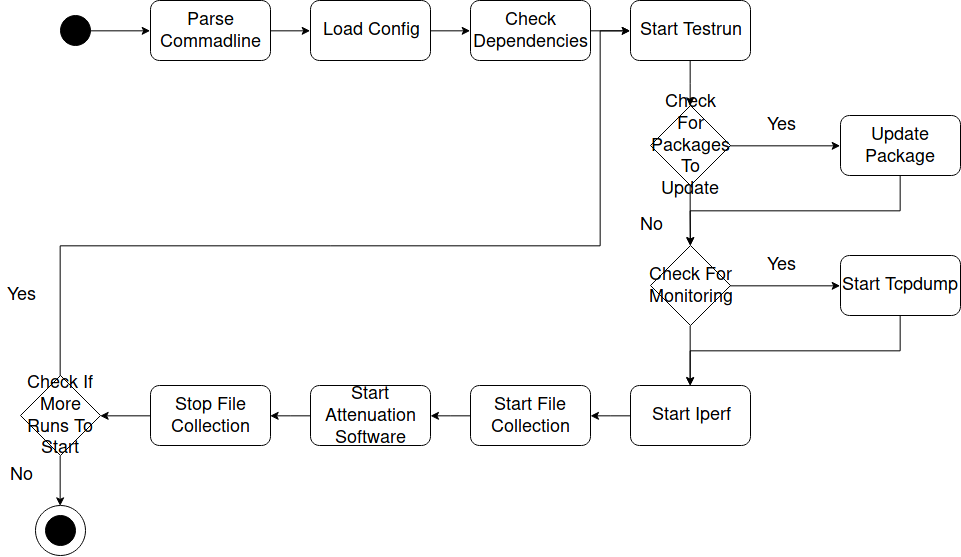

The current, reduced flow of conTest can be seen in the figure below. After processing the command-line arguments and loading the configuration file, conTest checks whether the necessary dependencies are present. If all dependencies are present, conTest starts its first test run. Before each test run, it checks whether the user provided packages to update and, if so, installs them. After that, the program checks whether the user set the monitoring flag and starts tcpdump accordingly. conTest then kills all running iperf processes and starts iperf on both sides. In the next step, the parallelized file collection and the attenuator control software are started. After the attenuator controller returns, the collection is stopped and the program restarts the loop until the given number of experiments is finished.
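The flow described above can be sketched as a plain loop. This is not the conTest implementation: the `Config` fields, the step names and the `do` callback are hypothetical and only mirror the order of the steps in the text.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class Config:
    """Stand-in for the parsed command-line arguments and configuration file."""
    experiments: int
    monitor: bool = False
    packages: Tuple[str, ...] = ()


def run(config: Config, do: Callable[[str], None]) -> None:
    """Call do(step_name) for every step, in the order described above."""
    do("check_dependencies")
    for _ in range(config.experiments):
        if config.packages:
            do("install_packages")
        if config.monitor:
            do("start_tcpdump")
        do("kill_iperf")
        do("start_iperf")
        do("start_file_collection")
        do("run_attenuator_control")  # assumed to block until the controller returns
        do("stop_file_collection")


# Record the steps instead of executing real commands.
trace: List[str] = []
run(Config(experiments=2, monitor=True), trace.append)
```

Passing the steps in as a callback keeps the sketch runnable without a testbed; a real run would map each step name to the corresponding action.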

Unfortunately there isn’t anything flashy to show right now. The next steps will be to create a Makefile and package both applications. Furthermore, I will add scripts to process the collected data and to prepare it for presentation. In addition, I will add sane defaults to the config file and add individual file collection speeds.