During this Google Summer of Code, I built visn, a testing framework designed specifically to integration-test Rust projects that rely on eventual consistency.

Originally, I imagined that a full network simulator was needed to thoroughly exercise the qaul.net API, as I discussed in my initial blog post. While sketching out designs for the libqaul API, as seen in this pull request (which influenced the actual service API), I discovered that it made much more sense to simply simulate the events coming into an individual instance. This is simpler to design, easier for developers to use, and less computationally complex.
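To make the idea concrete, here is a minimal sketch of testing one instance by feeding it events directly instead of simulating a whole network. The `Event` enum, the `Instance` type and the `replay` helper are hypothetical illustrations, not part of the libqaul or visn APIs.

```rust
use std::collections::BTreeMap;

/// Hypothetical events a single instance might receive from its peers.
#[derive(Debug, Clone)]
pub enum Event {
    MessageReceived { id: u32, body: String },
    MessageDeleted { id: u32 },
}

/// Hypothetical instance under test: only its local state is modelled,
/// there is no network at all.
#[derive(Debug, Default, PartialEq)]
pub struct Instance {
    pub messages: BTreeMap<u32, String>,
}

impl Instance {
    /// Apply one incoming event to the local state.
    pub fn handle(&mut self, event: &Event) {
        match event {
            Event::MessageReceived { id, body } => {
                self.messages.insert(*id, body.clone());
            }
            Event::MessageDeleted { id } => {
                self.messages.remove(id);
            }
        }
    }
}

/// Feed a sequence of simulated events into a fresh instance.
pub fn replay(events: &[Event]) -> Instance {
    let mut node = Instance::default();
    for event in events {
        node.handle(event);
    }
    node
}
```

The test only has to describe what arrives and check the resulting state; no routing, latency or peer processes need to be modelled.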

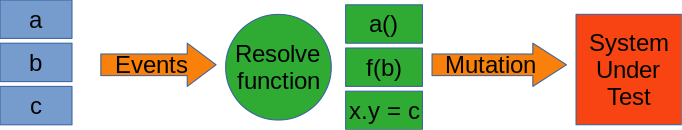

That insight led to the idea of a generic, easy-to-extend testing framework which could be used to test qaul at multiple levels at once. This framework, discussed in more detail in my second blog post, essentially boils down to a mechanism for writing tests in an easy-to-read format which is transformed into calls into the system under test.
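As an illustration of that mechanism, the sketch below parses a tiny line-based test script into steps and turns each step into a call on a system under test. The script syntax, `Step`, `Target`, `run` and the toy `Mailbox` are all hypothetical; visn's real format and API may look quite different.

```rust
use std::collections::HashMap;

/// One step of a hypothetical human-readable test script.
#[derive(Debug, PartialEq)]
pub enum Step {
    Send { from: String, to: String, text: String },
    Expect { user: String, count: usize },
}

/// Parse lines such as `send alice bob hi there` or `expect bob 1`.
pub fn parse(script: &str) -> Result<Vec<Step>, String> {
    script
        .lines()
        .map(str::trim)
        .filter(|line| !line.is_empty())
        .map(|line| -> Result<Step, String> {
            let mut words = line.split_whitespace();
            match words.next() {
                Some("send") => {
                    let from = words.next().ok_or("missing sender")?.to_string();
                    let to = words.next().ok_or("missing recipient")?.to_string();
                    // Everything after the recipient is the message text.
                    let text = words.collect::<Vec<_>>().join(" ");
                    Ok(Step::Send { from, to, text })
                }
                Some("expect") => {
                    let user = words.next().ok_or("missing user")?.to_string();
                    let count = words
                        .next()
                        .ok_or("missing count")?
                        .parse::<usize>()
                        .map_err(|e| format!("bad count: {e}"))?;
                    Ok(Step::Expect { user, count })
                }
                other => Err(format!("unknown step: {other:?}")),
            }
        })
        .collect()
}

/// Hypothetical interface the system under test exposes to the harness.
pub trait Target {
    fn send(&mut self, from: &str, to: &str, text: &str);
    fn inbox_len(&self, user: &str) -> usize;
}

/// Turn each parsed step into a call on the target, failing on the first mismatch.
pub fn run<T: Target>(target: &mut T, steps: &[Step]) -> Result<(), String> {
    for step in steps {
        match step {
            Step::Send { from, to, text } => target.send(from, to, text),
            Step::Expect { user, count } => {
                let got = target.inbox_len(user);
                if got != *count {
                    return Err(format!("{user}: expected {count}, got {got}"));
                }
            }
        }
    }
    Ok(())
}

/// Toy in-memory target used only to exercise the harness.
#[derive(Default)]
pub struct Mailbox {
    inboxes: HashMap<String, Vec<String>>,
}

impl Target for Mailbox {
    fn send(&mut self, _from: &str, to: &str, text: &str) {
        self.inboxes.entry(to.to_string()).or_default().push(text.to_string());
    }
    fn inbox_len(&self, user: &str) -> usize {
        self.inboxes.get(user).map_or(0, Vec::len)
    }
}
```

Keeping parsing separate from execution is what lets the same script drive different layers: any type implementing the (hypothetical) `Target` trait can be tested with it.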

While working on this idea, I was also involved in several pull requests which added various capabilities to the libqaul API, including adding the Message struct and designing security and consistency validation steps for messages.

Eventually, my first pull request for visn itself came along. I got a lot of useful feedback and decided that the easiest way to make sure I was writing the right kind of test framework was to actually use it: implementing features in libqaul and testing them at the same time as I implemented features in visn. This led to my third blog post, on the value of this approach to what I call “conceptual” testing, and to my first PR that actually implemented and tested some libqaul features, along with the PR that fixed the issues I mentioned in that blog post.

Around this time, the project moved to a GitLab instance, so my PRs are somewhat split. In addition to a first stab at a contacts book API, which I later revised, I added the most recent visn feature and, in my opinion, one of the most useful: permutation testing.

In essence, rather than simply testing a single order of events, visn now allows testing all possible orderings of a set of events. This is, of course, an O(n!) algorithm, since there are n! orderings of n events, but I was able to implement it efficiently with what eventually became the permute crate.
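A minimal sketch of what an all-orderings check does: apply every ordering of a set of events to a fresh state and require that all of them converge to the same result. For brevity it uses a plain recursive swap-based permutation generator rather than the permute crate, and `orderings` and `converges` are hypothetical helper names, not visn's API.

```rust
/// Generate every ordering of `items` by recursive swapping (n! results).
fn orderings<T: Clone>(items: &mut Vec<T>, k: usize, out: &mut Vec<Vec<T>>) {
    if k == items.len() {
        out.push(items.clone());
        return;
    }
    for i in k..items.len() {
        items.swap(k, i);
        orderings(items, k + 1, out);
        items.swap(k, i); // undo the swap before trying the next candidate
    }
}

/// Return true if applying `events` in every possible order yields the same state.
pub fn converges<E: Clone, S: PartialEq>(
    events: &[E],
    init: impl Fn() -> S,
    apply: impl Fn(&mut S, &E),
) -> bool {
    let mut all = Vec::new();
    orderings(&mut events.to_vec(), 0, &mut all);
    let mut finals = all.iter().map(|order| {
        let mut state = init();
        for e in order {
            apply(&mut state, e);
        }
        state
    });
    match finals.next() {
        None => true,
        Some(first) => finals.all(|s| s == first),
    }
}
```

For example, inserting into a set converges regardless of order, while overwriting a single value ("last write wins" without timestamps) does not, which is exactly the kind of bug an all-orders test is meant to surface.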

permute uses a data structure called an ArbitraryTandemControlIterator (ATCI), which stores both a reference to an array (a “slice”) and an iterator over indices into that array, and transparently iterates over references to the elements at those indices. This way, copying of the array’s elements is kept to an absolute minimum, reducing both the computational and the memory footprint.
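The idea fits in a few lines of safe Rust. The `TandemIter` type below is a hypothetical re-creation for illustration, not the permute crate's actual source: it pairs a borrowed slice with any iterator of indices and yields `&T`, so no element is ever cloned.

```rust
/// Yields `&data[i]` for each index `i` produced by `control`.
pub struct TandemIter<'a, T, I> {
    data: &'a [T],
    control: I,
}

impl<'a, T, I: Iterator<Item = usize>> TandemIter<'a, T, I> {
    pub fn new(data: &'a [T], control: I) -> Self {
        TandemIter { data, control }
    }
}

impl<'a, T, I: Iterator<Item = usize>> Iterator for TandemIter<'a, T, I> {
    type Item = &'a T;

    fn next(&mut self) -> Option<&'a T> {
        let data: &'a [T] = self.data;
        // Out-of-range indices panic, just like ordinary slice indexing.
        self.control.next().map(|i| &data[i])
    }
}
```

Because the item type is a reference tied to the slice's lifetime, the borrow checker guarantees the data outlives the iterator, and the only per-item cost is one index lookup.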

For example, reversing the elements of a vector using an ATCI:

```rust
use permute::ArbitraryTandemControlIterator; // assumes the crate root exports the ATCI

fn main() {
    let data = vec![
        String::from("red"),
        String::from("orange"),
        String::from("yellow"),
        String::from("green"),
        String::from("blue"),
        String::from("indigo"),
        String::from("violet"),
    ];

    // Indices 6 down to 0 visit all seven elements in reverse order.
    let control = vec![6, 5, 4, 3, 2, 1, 0];

    let atci = ArbitraryTandemControlIterator::new(&data, control.into_iter());

    for (atci_val, rev_val) in atci.zip(data.iter().rev()) {
        // Critically, these are both &std::string::String. No copying occurred.
        assert_eq!(atci_val, rev_val);
    }
}
```

Of course, control could just as easily be vec![6, 5, 6, 4, 4, 3, 2, 1] or any other sequence of valid indices, repeats included.
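Repeated indices simply yield the same reference more than once. The hypothetical `gather` helper below shows that behaviour with standard iterator adaptors alone, and also makes "valid indices" explicit: it uses `slice::get` so an out-of-range index produces `None` instead of a panic. It is an illustration, not part of the permute crate.

```rust
/// Collect references to `data` in the order given by `control`.
/// Returns `None` if any index is out of range instead of panicking.
pub fn gather<'a, T>(data: &'a [T], control: &[usize]) -> Option<Vec<&'a T>> {
    // Collecting an iterator of Option<&T> into Option<Vec<&T>>
    // short-circuits on the first None.
    control.iter().map(|&i| data.get(i)).collect()
}
```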

Combined with an implementation of Heap’s algorithm for permuting lists, permute can provide an efficient method of generating all possible orderings in a deterministic way.
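Below is a sketch of how the two pieces could fit together, assuming index permutations are used as control sequences; it does not reproduce permute's actual implementation. `heap_permutations` is the standard iterative form of Heap's algorithm over indices 0..n, and `orderings_of` (a hypothetical name) maps each index permutation to references into the original slice. For brevity it collects all n! index vectors up front; a lazy iterator would avoid that.

```rust
/// Heap's algorithm (iterative form): every permutation of 0..n, each
/// produced from the previous one by a single swap, in a fixed order.
pub fn heap_permutations(n: usize) -> Vec<Vec<usize>> {
    let mut perm: Vec<usize> = (0..n).collect();
    let mut counters = vec![0usize; n];
    let mut out = vec![perm.clone()];
    let mut i = 1;
    while i < n {
        if counters[i] < i {
            // Even i swaps with position 0; odd i swaps with counters[i].
            if i % 2 == 0 {
                perm.swap(0, i);
            } else {
                perm.swap(counters[i], i);
            }
            out.push(perm.clone());
            counters[i] += 1;
            i = 1;
        } else {
            counters[i] = 0;
            i += 1;
        }
    }
    out
}

/// Each index permutation acts as a control sequence over the borrowed slice,
/// so every ordering is a Vec of references and no element is cloned.
pub fn orderings_of<T>(data: &[T]) -> impl Iterator<Item = Vec<&T>> + '_ {
    heap_permutations(data.len())
        .into_iter()
        .map(move |control| control.into_iter().map(move |i| &data[i]).collect::<Vec<&T>>())
}
```

Since the swap sequence depends only on n, the orderings come out in the same order on every run, which keeps failing test cases reproducible.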

With that done, I was able to add a sample all-orders test for some aspects of the libqaul API and tidy up the internal testing crate so that others can use this work. Once these two merge requests are reviewed and accepted, the visn testing framework and the service_sim crate in the qaul.net tree will be fully able to support ongoing libqaul development.

Google Summer of Code with Freifunk has been a wonderful experience during which I’ve worked with cool technologies, amazing peers, and extremely helpful mentors. Overall, I think it’s made me a much better technologist and programmer, and I’d like to wholeheartedly thank Google, Freifunk, and my mentors for the amazing opportunity.